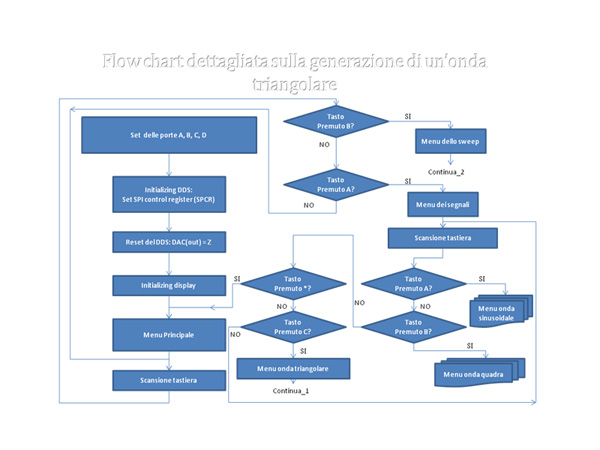

% ffmpeg -i /Volumes/6TB_RAID5/ffmpeg_3DLUT/Source_LOGC_Alexa_trim.mov -s 1920x1080 -vf lut3d="/Volumes/6TB_RAID5/ffmpeg_3DLUT/ArriAlexaLogCtoRec709.dat" -vcodec prores_ks -pix_fmt yuv422p /Volumes/6TB_RAID5/ffmpeg_3DLUT/Alexa_LogC_LUT_converted_ffmpeg.mov
ffmpeg version 2.0 Copyright (c) 2000-2013 the FFmpeg developers
built on 10:30:44 with llvm-gcc 4.2.1 (LLVM build 2336.11.00)
configuration: --enable-gpl --enable-version3 --enable-nonfree --enable-static --disable-shared --enable-postproc --enable-libass --enable-libcelt --enable-libfaac --enable-libfdk-aac --enable-libfreetype --enable-libmp3lame --enable-libopencore-amrnb --enable-libopencore-amrwb --enable-openssl --enable-libtheora --enable-libvo-aacenc --enable-libvorbis --enable-libvpx --enable-libx264 --enable-libxvid

To reproduce: take a LOG-C Alexa QuickTime ProRes 4444 file and convert it with the command above. I can upload sample files to your FTP so you can try it yourself.
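One way to check whether a command like the one above changes colors on its own, independent of the LUT contents, is to run it once with an identity LUT. The sketch below is only an illustration: the function name `identity_cube` and the output file name `identity.cube` are made up here, and it writes the `.cube` text format (which ffmpeg's lut3d filter also reads) instead of the `.dat` file used above.

```python
def identity_cube(size=17):
    """Return the text of an identity 3D LUT in .cube format.

    `size` is the number of grid points per axis (hypothetical default).
    """
    lines = ["LUT_3D_SIZE %d" % size]
    step = 1.0 / (size - 1)
    # In the .cube layout the red index changes fastest, then green, then blue.
    for b in range(size):
        for g in range(size):
            for r in range(size):
                lines.append("%.6f %.6f %.6f" % (r * step, g * step, b * step))
    return "\n".join(lines) + "\n"

# Usage (assumed file name): save the text, then pass the file to lut3d.
# with open("identity.cube", "w") as f:
#     f.write(identity_cube())
```

If the output of a run with this LUT already looks different from the source, the shift probably comes from another stage of the command (for example the pixel format conversions around the filter) and not from the LogC to Rec709 table itself.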

I'm working a lot with ARRI Alexa LOG-C footage and tried to convert it with the new 3D LUT filter (lut3d). The result differs a lot from a file which I converted in DaVinci Resolve with the built-in LOG-C to REC709 LUT and with one downloaded from the ARRI LUT Generator. The gamma seems to be OK, but the colors seem to be incorrect.

Recent years have witnessed the increasing popularity of learning based methods to enhance the color and tone of photos. However, many existing photo enhancement methods either deliver unsatisfactory results or consume too much computational and memory resources, hindering their application to high-resolution images (usually with more than 12 megapixels) in practice. In this paper, we learn image-adaptive 3-dimensional lookup tables (3D LUTs) to achieve fast and robust photo enhancement. 3D LUTs are widely used for manipulating color and tone of photos, but they are usually manually tuned and fixed in camera imaging pipeline or photo editing tools. We, for the first time to our best knowledge, propose to learn 3D LUTs from annotated data using pairwise or unpaired learning. More importantly, our learned 3D LUT is image-adaptive for flexible photo enhancement. We learn multiple basis 3D LUTs and a small convolutional neural network (CNN) simultaneously in an end-to-end manner. The small CNN works on the down-sampled version of the input image to predict content-dependent weights to fuse the multiple basis 3D LUTs into an image-adaptive one, which is employed to transform the color and tone of source images efficiently. Our model contains less than 600K parameters and takes less than 2 ms to process an image of 4K resolution using one Titan RTX GPU. While being highly efficient, our model also outperforms the state-of-the-art photo enhancement methods by a large margin in terms of PSNR, SSIM and a color difference metric on two publicly available benchmark datasets.

Replace the trilinear interpolation with torch.nn.functional.grid_sample.
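The two operations the abstract describes, fusing several basis 3D LUTs with content-dependent weights and applying the fused LUT by trilinear interpolation, can be sketched in plain Python. This is not the authors' implementation: the function names are hypothetical, the LUTs are nested lists indexed as `lut[r][g][b]`, and the weights are passed in by hand where the paper's model would have the small CNN predict them.

```python
def identity_lut(n):
    """n x n x n identity LUT, indexed as lut[r][g][b] -> (R, G, B)."""
    s = 1.0 / (n - 1)
    return [[[(r * s, g * s, b * s) for b in range(n)]
             for g in range(n)] for r in range(n)]


def fuse_luts(luts, weights):
    """Weighted sum of basis LUTs (weights stand in for the CNN output)."""
    n = len(luts[0])
    return [[[tuple(sum(w * lut[r][g][b][c] for w, lut in zip(weights, luts))
                    for c in range(3))
              for b in range(n)] for g in range(n)] for r in range(n)]


def apply_lut(lut, rgb):
    """Trilinear interpolation of one RGB pixel with components in [0, 1]."""
    n = len(lut)
    idx, frac = [], []
    for v in rgb:
        x = min(max(v, 0.0), 1.0) * (n - 1)  # position on the LUT grid
        i = min(int(x), n - 2)               # lower corner of the enclosing cell
        idx.append(i)
        frac.append(x - i)
    out = [0.0, 0.0, 0.0]
    # Blend the 8 corners of the cell, each weighted by its opposite volume.
    for dr in (0, 1):
        for dg in (0, 1):
            for db in (0, 1):
                w = ((frac[0] if dr else 1.0 - frac[0]) *
                     (frac[1] if dg else 1.0 - frac[1]) *
                     (frac[2] if db else 1.0 - frac[2]))
                corner = lut[idx[0] + dr][idx[1] + dg][idx[2] + db]
                for c in range(3):
                    out[c] += w * corner[c]
    return tuple(out)
```

Because the fused LUT is computed once per image and the lookup is independent for each pixel, the cost of the color transform does not depend on how the weights were obtained. A per-pixel loop like this one is only meant to show the arithmetic; a practical version would vectorize the lookup.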

Learning Image-adaptive 3D Lookup Tables for High Performance Photo Enhancement in Real-time

Downloads: Paper, Supplementary, Datasets

The whole datasets used in the paper are over 300G. Here I only provided the FiveK dataset resized into 480p resolution (including 8-bit sRGB, 16-bit XYZ inputs and 8-bit sRGB targets). I also provided 10 full-resolution images for testing speed. To obtain the entire set of full-resolution images, it is recommended to convert them from the original FiveK dataset.

A model trained on the 480p resolution can be directly applied to images of 4K (or higher) resolution without performance drop. This can significantly speed up the training stage, since the very heavy high-resolution images do not have to be loaded.
